One of the cornerstones of military thought is the concept of force concentration – focusing a large portion of one’s own fighting strength on a small portion of the enemy’s. With different wordings (American doctrine, for one, refers to it as mass) this maxim is present in the Principles of War followed by all modern armed forces, and we can find various expressions of it as far back as Sun Tzu’s time.

The principle of concentration might seem self-evident to us at first. It does not take a great deal of analysis to realise that having a superior force is an advantage in any armed conflict – it could even strike us as tautological, in that any force that wins a battle is automatically proving to be superior to its adversary.

In practice, however, it is all much subtler. To begin with, “superior force” can mean a number of different things, from a strictly numerical advantage to better training or equipment. Even more important is the idea that this superiority, whatever we take it to mean, does not need to happen everywhere at once. A force that is generally inferior might, through planning and manoeuvre, obtain superiority at a specific place and time in order to achieve an objective of military value. Quoting Air Marshal David Evans:

Concentration does not just mean a massing of forces. It implies having forces so disposed as to be able to unite to deliver the decisive blow when and where required, or to counter the enemy’s threats or attacks.

Evans, David – War: a Matter of Principles (2000)

In other words, overwhelming the enemy is not exclusively a matter of numbers, but a consequence of being in the right place at the right time. To achieve success, a commander must strive to apply combat power when and where it is useful, while trying to avoid any engagement that is not favourable, or simply does not contribute towards a worthwhile purpose.

ANALYTICAL STUDY OF CONCENTRATION

When Frederick W. Lanchester presented his Square Law in his 1916 book Aircraft in Warfare, he envisioned it as a mathematical analysis of the principle of concentration. The same intention prompted the earlier (but classified until much later) work by J. V. Chase in 1902.

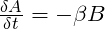

Arguably the best-known mathematical combat model ever formulated, the Square Law is a set of two differential equations created to calculate attrition rates of two opposing forces, assuming all elements of force A can fire upon all elements of force B, and vice versa. They take the form:

$$\frac{dA}{dt} = -\beta B, \qquad \frac{dB}{dt} = -\alpha A$$

Where A and B are the number of elements in each force, and α and β, known as Lanchester coefficients, are the number of enemy elements that a single element of A and of B (respectively) can put out of action in a time increment.

Combining both equations, we see that two opposing forces will be exactly equal in fighting strength (and hence would eventually destroy each other if neither retreated) if the following equality is met:

$$\alpha A^2 = \beta B^2$$

The last expression shows that, under these assumptions, a force’s fighting strength scales with the square of its numbers – and for that reason we refer to it as the Square Law.
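
The behaviour described above can be checked numerically. What follows is a minimal Python sketch, not the author's implementation: it assumes the attrition equations dA/dt = −βB and dB/dt = −αA, integrates them with a plain Euler step, and compares the result with the closed form that follows from the conserved quantity αA² − βB². The function names, the step size and the coefficient values are illustrative assumptions.

```python
import math


def simulate_square_law(a0, b0, alpha, beta, dt=0.001, max_steps=1_000_000):
    """Euler integration of dA/dt = -beta*B, dB/dt = -alpha*A.

    Stops when either force is exhausted (or after max_steps, which
    guards against the evenly matched case that never reaches zero).
    """
    a, b = float(a0), float(b0)
    for _ in range(max_steps):
        if a <= 0 or b <= 0:
            break
        # Both updates use the values from the start of the step.
        a, b = a - beta * b * dt, b - alpha * a * dt
    return max(a, 0.0), max(b, 0.0)


def survivors_closed_form(a0, b0, alpha, beta):
    """Survivors of the winning side, from alpha*A^2 - beta*B^2 = constant."""
    margin = alpha * a0 ** 2 - beta * b0 ** 2
    if margin >= 0:
        return math.sqrt(margin / alpha), 0.0
    return 0.0, math.sqrt(-margin / beta)


# Hypothetical example: 50 units against 40, equal quality on both sides.
print(simulate_square_law(50, 40, alpha=0.1, beta=0.1))
print(survivors_closed_form(50, 40, alpha=0.1, beta=0.1))
```

With equal coefficients, the larger force finishes with about √(50² − 40²) = 30 units: a numerical edge of 25 percent leaves the winner with 60 percent of its strength intact.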

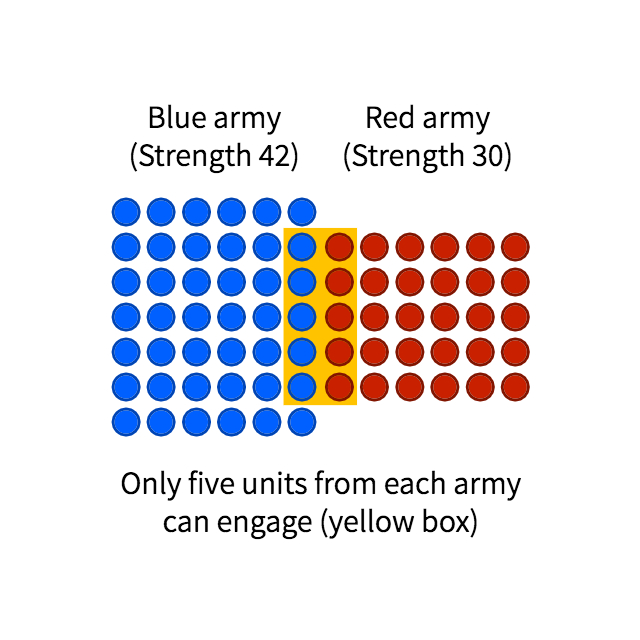

This conclusion is very often misunderstood as a statement that numerical superiority trumps all. Lanchester never believed so – how could he? Even a cursory glance at military history tells us otherwise. The truth of the matter is that a battle is never as simple as two forces uniformly inflicting casualties on each other over a period of time. Instead, we should look at it as a combination of smaller, discrete clashes between fractions of the opposing sides – conditioned by weapon range, terrain features, tactical manoeuvre, etc. It is only in these smaller clashes that the Square Law is expected to apply.

The principle of concentration dictates that we must aim to achieve superiority at this reduced, local level, if we are to prevail on a broader scale. In his original work, Lanchester provides two examples from history in which a smaller force was able to overcome a larger one by dividing it into fractions through manoeuvre, and then defeating those fractions separately – or in detail, in military terms: the French defeat at Würzburg in September 1796, and Napoleon’s victory at Arcole two months later. Both cases demonstrate, he argues, that the fighting strength of an army is greater than the sum of its parts. A mathematical analysis supporting this conclusion follows.

PROPORTIONS

As per the Square Law, a force of A units will have a fighting strength (let us call it $FS_A$) proportional to the square of its number. That is:

$$FS_A \propto A^2$$

If we split that same force into two halves, its fighting strength would be proportional to the sum of the strengths of its parts, or:

$$FS_{A/2} + FS_{A/2} \propto \left(\frac{A}{2}\right)^2 + \left(\frac{A}{2}\right)^2 = \frac{A^2}{2}$$

This would result in a reduced combat effectiveness. For example, a force 50 strong would have a strength proportional to 50² (or 2500). The same force divided into two groups of 25 would have an effectiveness proportional to 25² + 25², or merely 1250; one half, in fact, of its value as a cohesive unit.

We will now look at an application of this principle to the study of naval history, as found in Lanchester’s original work.

CONCENTRATION AND THE LINE OF BATTLE

From the mid-17th century, about a hundred years into what has come to be known as the Age of Sail, it became standard practice for fighting navies to do battle in a line formation. This seems a natural development, as ships were generally designed to fire abeam; the line ensured that no ship masked the guns of another.

(painting by Thomas Whitcombe)

Perhaps more importantly, in an era before the invention of wireless telegraphy, and with ship formation lengths often exceeding the nautical mile, the line was relatively easy to keep and manoeuvre, and could be led somewhat effectively from its middle; when in doubt, all a captain had to do was follow the ship ahead.

However, the limited traverse and range of naval guns in this period made it unlikely that two consecutive ships in the formation would be able to overlap their fire – and virtually impossible that three would do so. As a means of concentrating firepower, therefore, it was not the most efficient. Wayne P. Hughes Jr. tells us:

It is proper therefore to think of the column itself primarily as a means of controlling the force inherent in the admiral’s ships and only secondarily as a means of effecting concentration of firepower.

Hughes Jr, Wayne P. – Fleet Tactics and Coastal Combat (1999)

Under such restrictive tactical conditions, naval engagements (especially those between fleets of comparable sizes) would often prove indecisive, with neither side exerting true concentration of force. As Charles Henri d’Estaing would put it, naval battles often produced “more noise than profit”.

Historical literature frequently describes as ‘decisive’ those actions in which the conventional line was abandoned: Lanchester and Hughes refer to the Battle of the Saintes of 1782 (known to the French authors as the Battle of Dominica) in which Sir George Rodney defeated the Comte de Grasse by breaking the French line at three places, enveloping the resulting segments, and engaging them in detail. Similar dispositions were employed successfully by John Jervis at Cape St. Vincent, and Adam Duncan at Camperdown, in 1797. A year later, Lord Nelson carried the day at the Nile by separating his fleet into divisions, and doubling on the French van.

We could be tempted to believe that adopting these modern tactics was in every case desirable, and blame all who did not for a lack of awareness of what seemed to be a clear trend. But we must bear in mind that, in breaking the formation, concentration of fire was obtained at the expense of the ability to actively lead the fleet; the line of battle was, above all, an instrument of command and control, and without it there was little chance of issuing new orders or adapting to emerging situations. Anything that had not been carefully planned and drilled beforehand would be left, once the first shot was fired, to the discretion and best judgement of each captain. It was a gambit that not every navy could afford.

THE BATTLE OF TRAFALGAR

In his memorandum to the combined fleet at Toulon before Trafalgar, French admiral Pierre-Charles Villeneuve echoes this tactical outlook:

The enemy will not content himself with merely forming a line of battle parallel to ours, and so engaging us in an artillery combat wherein success falls often to the side that is more skilful, but always to the side that is more lucky. He will try to surround our Rear, to cut through us, and to bring to bear groups of his own ships upon those of ours that he has isolated in order to envelop and crush them.

James, William – Naval History of Great Britain, vol. III (1837)

His description of how the battle would play out is remarkably accurate, and reproduces Horatio Nelson’s own original plan almost to the letter. This tells us two things: first, the British strategy was really no surprise to anybody – we have mentioned already many precedents from which lessons had been duly learned. Second, there was not much Villeneuve could do about it, even if he knew in advance. A fleet had to be drilled and coordinated to a remarkably high standard in order to operate outside the constraints of the traditional line of battle, and the French admiral had no means of parrying the blow.

MODELLING THE BATTLE

Furthermore, the British could hardly have expected a clear victory by keeping the line. Nelson himself, in his 1805 memorandum, argues it would have been:

[…] almost impossible to bring a Fleet of forty Sail of the Line into a line of battle in variable winds, thick weather, and other circumstances which must occur, without such a loss of time that the opportunity would probably be lost of bringing the enemy to battle in such a manner as to make the business decisive.

James, William – Naval History of Great Britain, vol. III (1837)

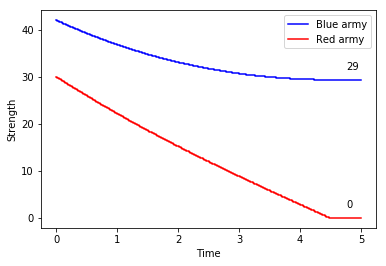

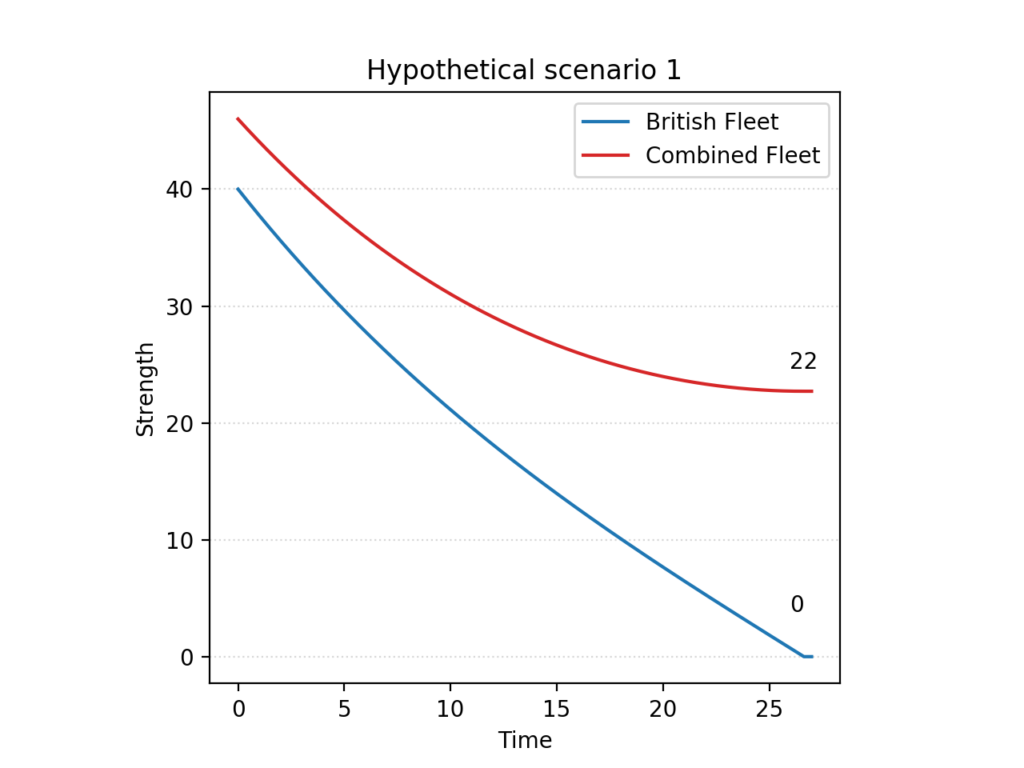

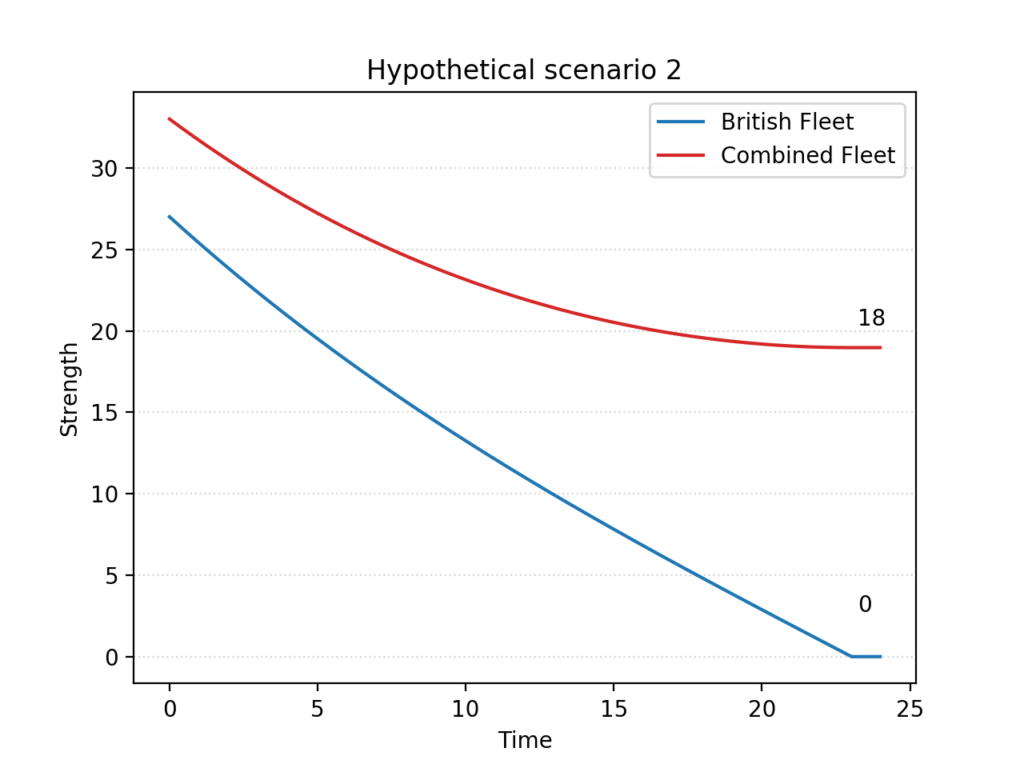

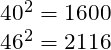

To the difficulties of manoeuvre we must add a discouraging numerical disadvantage, which would have been most apparent in a prolonged cannonade at long range. In Aircraft in Warfare, Lanchester uses his Square Law to predict the likely outcome had the old tactics been adhered to. The analysis begins with the hypothetical scenario of 40 British sail of the line facing 46 from the Combined Fleet, as originally predicted by Nelson.

The Franco-Spanish fleet would be expected to win, and by a wide margin, assuming the ships and crews on both sides to be equal. Just to achieve parity, the British fleet would have needed a qualitative advantage of roughly 32 percent.

If we look instead at the numbers ultimately engaged in the battle, the British disadvantage is even more dire. In the proceedings of the 20th International Conference on Technology in Collegiate Mathematics (ICTCM) of 2009, William P. Fox of the Naval Postgraduate School does the same calculation as Lanchester, but for the historical 27 sail of the line under Nelson facing Villeneuve and Gravina’s 33:

In this case, the model predicts that the British ships would have to fight almost fifty percent more efficiently to compete on equal terms.
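
Both percentages follow from the parity condition of the Square Law: if αA² = βB², the smaller force A needs a per-ship effectiveness ratio α/β of (B/A)². The short Python sketch below computes that ratio for the two scenarios; the function name is an illustrative assumption, not taken from Lanchester or Fox.

```python
def parity_advantage(smaller, larger):
    """Fractional per-unit superiority the smaller force needs so that
    alpha * smaller**2 == beta * larger**2 (Square Law parity)."""
    return (larger / smaller) ** 2 - 1


# Nelson's anticipated fleets, then the numbers actually engaged.
for british, combined in [(40, 46), (27, 33)]:
    needed = parity_advantage(british, combined)
    print(f"{british} vs {combined}: British need about {needed:.0%} more per ship")
```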

It is worth noting that, as represented here, both scenarios show the opposing fleets just holding alongside at some distance, and pounding away at each other until the utter destruction of either, or both. No analyst would even begin to believe that, of course: the purpose of Lanchester’s equations in situations such as these is merely to estimate the relative advantage of one side over the other, not the final result of an engagement. In the absence of an obvious edge of manoeuvre that would prevent the enemy from withdrawing, a more likely outcome would be an indecisive exchange of fire after which, in the poignant words of the comte d’Estaing, the sea would “remain no less salty than before”.

THE SUM OF ITS PARTS

We know Nelson did not lose – and as Fox tells us, without a clear numeric or qualitative advantage over the Combined Fleet, the only option would have been a change in strategy.

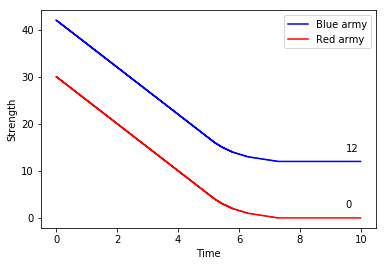

A simple but elegant explanation can be found in Lanchester’s original work, as he analyses the rough sketch of a battle plan found in Nelson’s original memorandum of 1805. The Square Law, we remember, tells us that the fighting power of a formation is proportional to the square of its numerical strength. Prior to the battle, the British admiral expected to be able to bring 40 sail of the line into the action, to the enemy’s 46. Looking at the numbers behind one of the charts we saw earlier, we note that each side’s relative strengths would be:

$$FS_{\text{British}} \propto 40^2 = 1600, \qquad FS_{\text{Combined}} \propto 46^2 = 2116$$

Assuming no qualitative upper hand to either side, the British fleet would be at a disadvantage of 516 – or roughly 32%.

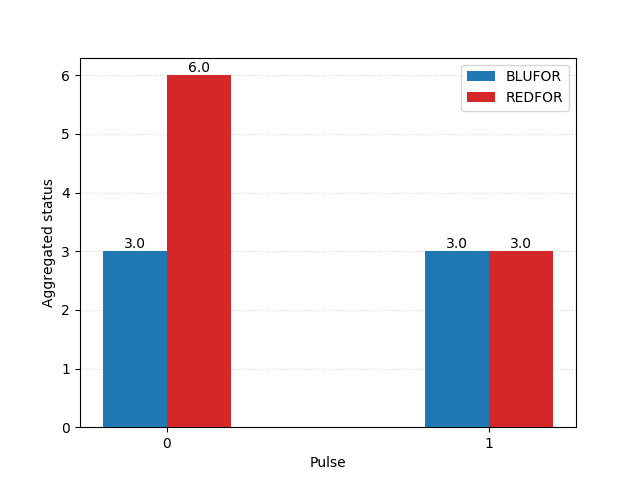

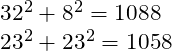

Rather than face these unfavourable odds in a direct clash, Nelson’s plan was to split the Franco-Spanish line into two, taken in this analysis as two halves of 23 ships each. To achieve this, he would detach his eight fastest two-deckers to engage and occupy the enemy’s van, while the isolated rear was overwhelmed by the remaining 32 British ships. The analysis must now gauge the relative strengths of these smaller groups separately:

$$FS_{\text{British}} \propto 32^2 + 8^2 = 1024 + 64 = 1088$$

$$FS_{\text{Combined}} \propto 23^2 + 23^2 = 529 + 529 = 1058$$

Which would give the British an advantage of 30 – just shy of 3% – over the Combined Fleet.
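
The arithmetic behind both comparisons is easy to reproduce. The Python sketch below sums the squares of each group's numbers, assuming equal quality on both sides and, as the quoted margin of 30 implies, a Combined Fleet cut into two halves of 23 ships. The helper name is an illustrative assumption.

```python
def fighting_strength(groups):
    """Square Law strength of a force fighting as separate groups,
    assuming equal quality: the sum of the squares of the group sizes."""
    return sum(n ** 2 for n in groups)


# Both fleets fighting as single, undivided lines of battle.
line_margin = fighting_strength([40]) - fighting_strength([46])

# Nelson's plan: 32 + 8 British ships against two enemy halves of 23.
split_margin = fighting_strength([32, 8]) - fighting_strength([23, 23])

print(line_margin, split_margin)  # prints: -516 30
```

The total number of ships on each side is the same in both cases; only the grouping changes, and with it the sign of the margin.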

THE SQUARE LAW WITH REINFORCEMENTS

Alternatively, we could model the battle as a series of smaller, consecutive actions, in which different parts of each fleet join the fray at different times. This is not unreasonable, since Nelson’s plan was precisely to use his weather column (led by his own command HMS Victory) to isolate the van of the Combined Fleet, and keep it from supporting the rest of the formation in the first stage of the action.

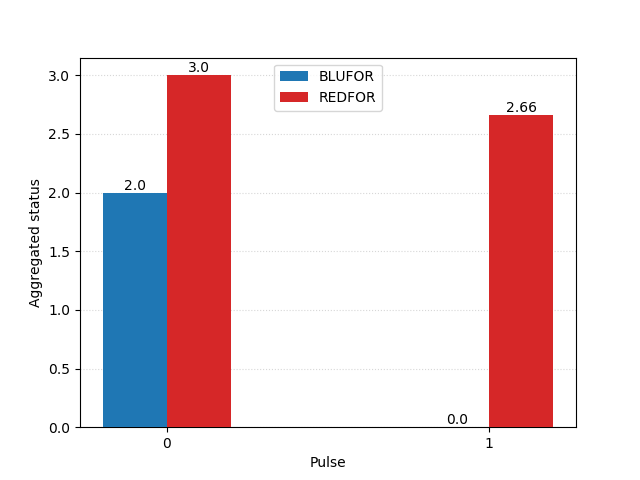

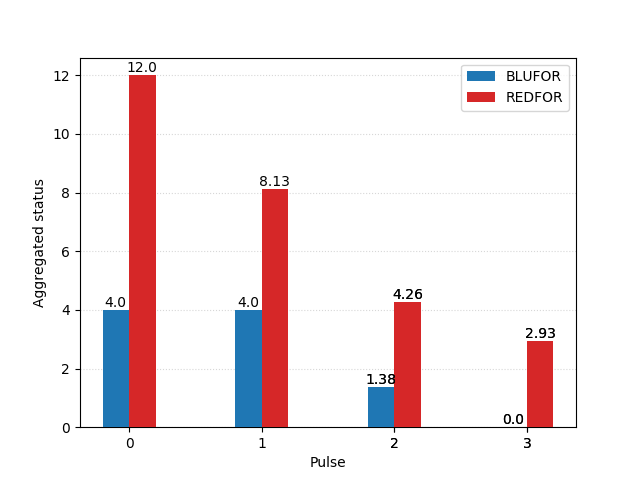

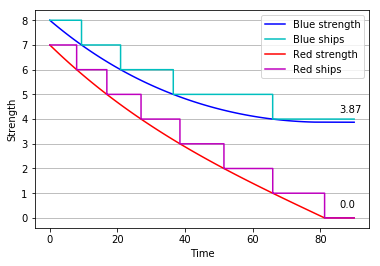

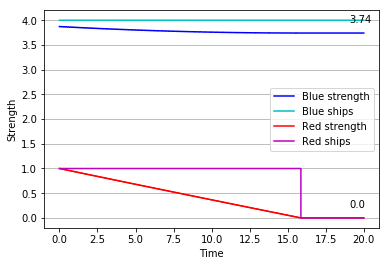

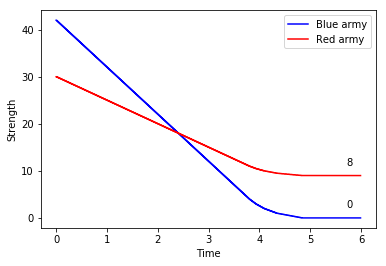

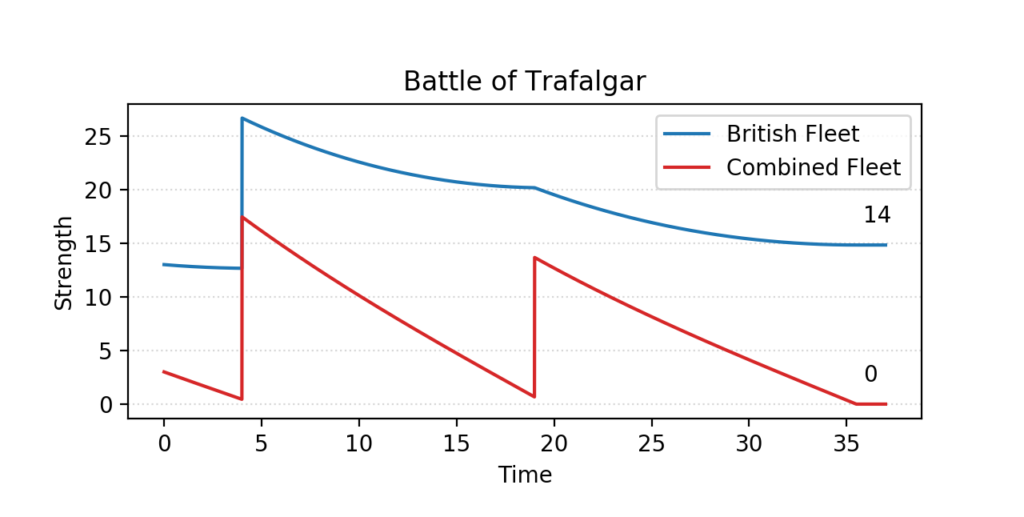

That is the approach chosen by W.P. Fox in the proceedings of the 20th ICTCM. In his model, Nelson’s fleet of 27 sail of the line is divided into two columns, sized 13 and 14. They sail through the Allied formation in two places, separating it into three groups of 17 (rear), 3 (centre), and 13 (van).

The British column of 13 first engages the enemy’s three centre ships, quickly overpowering them. They are then joined by the 14 ships in the reserve, and together they tackle the more numerous enemy rear. There, too, they gain the upper hand, and finally direct their attention to the last 13 sail in the Franco-Spanish van.

In this scenario, the British fleet not only seizes victory, but even does so with a comfortable margin – its remaining forces are equivalent to the combat power of 13 to 14 intact ships, which is about one half of its original strength; the Combined Fleet, in spite of having a slight numerical advantage at first, is completely annihilated.
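
The same three phases can be approximated with the closed form of the Square Law, in which a stronger force of A units that destroys B units of equal quality is left with √(A² − B²). The Python sketch below is a simplified illustration under that assumption and is not Fox's model: it fights every phase to the destruction of the weaker group, and the function names are hypothetical. Its final figure is therefore indicative only and need not match the one quoted from Fox.

```python
import math


def survivors(stronger, weaker):
    """Strength left to the stronger force once the weaker is destroyed,
    assuming equal coefficients: sqrt(stronger^2 - weaker^2)."""
    if stronger <= weaker:
        raise ValueError("the first force must be the stronger one")
    return math.sqrt(stronger ** 2 - weaker ** 2)


def phased_battle(first_column, reserve, centre, rear, van):
    """Three consecutive engagements, as outlined in the text."""
    british = survivors(first_column, centre)     # phase 1: column vs centre
    british = survivors(british + reserve, rear)  # phase 2: reserve joins, vs rear
    return survivors(british, van)                # phase 3: vs van


remaining = phased_battle(first_column=13, reserve=14, centre=3, rear=17, van=13)
print(f"British strength left after three phases: {remaining:.1f}")

# For contrast, line against line with the same totals (27 vs 33).
print(f"Combined Fleet strength left, line against line: {survivors(33, 27):.1f}")
```

Even in this cruder form the qualitative result is the same: the fleet that would lose a single line-against-line engagement wins when it meets the enemy one fraction at a time.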

READING INTO THE RESULTS

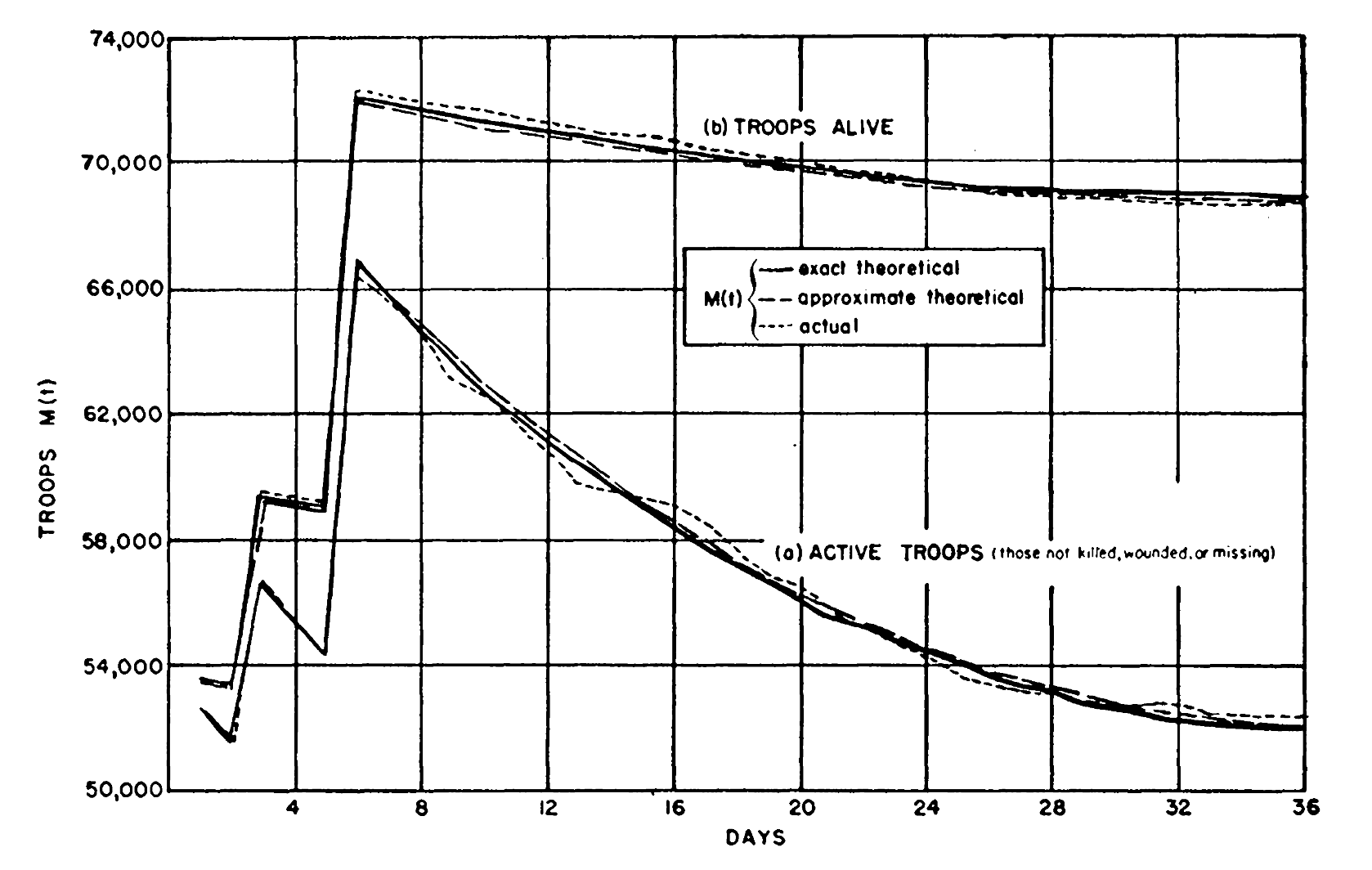

Fox’s model represents the battle in three distinct phases, which take place in order. This might give us the illusion that it is a high-resolution model – meaning, it tells us in some detail what is happening when. It is important to remember that it is not, and in fact no application of Lanchester’s Square Law ever had that pretence.

What it does clearly expose is that numbers (of ships, of men, of guns) mean little without a context. A battle is a complex event, formed organically by numerous smaller clashes; numerical superiority, of course, is a clear advantage in any one of them. But it is within the domain of tactics to ensure that we have this advantage where and when it can lead us to a favourable result. Nelson could not have won without concentrating his forces intelligently; the judicious application of the same principle had granted him victory before, as it had Jervis, Rodney, and Duncan. As its author originally intended, the Square Law remains today an eloquent demonstration of the merits of force concentration.

GitHub

The Python implementations of the models used in this article for plotting simulated combat results can be found on the author’s GitHub page.